“Virtual partner” is a technology that can play with your emotions

New research may be able to combat behavioral disorders using coordination-sensitive technology.

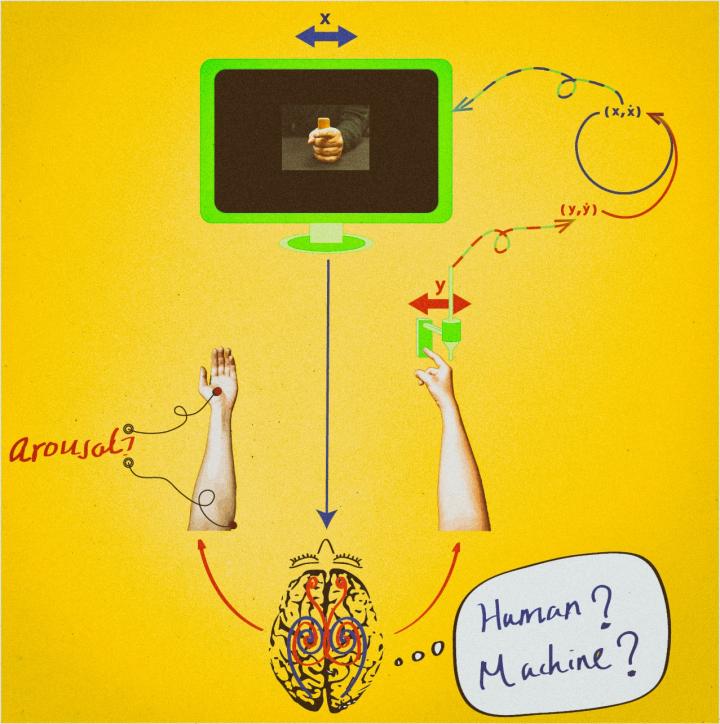

“Virtual Partner” adapts to participants’ movements, making it hard to determine if it is a human or machine behind the monitor. Photo courtesy of Florida Atlantic University.

May 23, 2016

Florida Atlantic’s behavioral science department wants to make people mad by giving them the finger, but not the way you think.

A new research study at FAU’s Center for Complex Systems and Brain Sciences (CCSBS) focused on a machine’s capability to interact emotionally with a human. The researchers succeeded, creating a machine called a “virtual partner.”

The virtual partner is a moving on-screen image that monitors participant input — in this case a finger moving back and forth — and adjusts what is shown on screen based on what the participant is doing. The technology is actively adapting to what it is seeing, much like a human, and in this case has been programmed to frustrate the participant.

“We usually get responses like, ‘this thing is definitely messing with me,’” said Emmanuelle Tognoli, Ph.D., a co-author of the experiment.

The goal of this project was to prove that a machine can actively interact with a human just as two people interact with each other, and, according to the researchers, many participants believed that the virtual partner was being run by a human — not algorithms.

The mathematical algorithms of behavior are the product of four decades of research on the subject led by J.A. Scott Kelso, Ph.D., who founded the university’s CCSBS, according to EurekAlert, a global website that offers science news.

The study proved that machines can hold their own as partners in a behavioral exchange, and could be useful in a rehabilitation scenario for individuals who struggle with social interaction and expression.

“We continue to develop this research to expand the range of movement that our technology can sympathize with,” Tognoli said.

Right now the technology can only interact based on one simple finger movement, but Tognoli said that simple base movement of the finger would give way to more complex movements and gestures.

“Many debilitating diseases, like autism and schizophrenia, two examples, can be helped by refining social behavior and response through a program called ‘virtual teacher,’” Tognoli explained. “Small interactions are repetitively ingrained into the subject as they interact with the virtual teacher and improve.”

Tognoli also said that other researchers from different universities have already begun to take this technology and attempt to expand the range of motion it is capable of replicating, ultimately moving toward a program with access to the full spectrum of human gestures.

According to Guillaume Dumas, Ph.D., co-author and a former member of FAU’s CCSBS, artificial intelligence development has been focused on an “algorithmic approach of human cognition,” but now there is a focus on developing the social and emotional aspects of AI.

The research team hopes that the applications of virtual partner will grow and that it will become a useful rehabilitation technology for patients with limited social and emotional capability.

Tucker Berardi is a staff writer for the University Press. For information regarding this or other stories, email [email protected] or tweet him @tucker_berardi.